As a Research Intern at Gade Autonomous Systems, I had the opportunity to implement the Human-Robot Interaction module on a NAO humanoid robot to promote linguistic and social learning in children with autism spectrum conditions.

Among other things, I programmed NAO to perform the Surya Namaskar, the traditional Indian practice of Sun Salutation. The video starts a bit shaky but steadies after a few seconds:

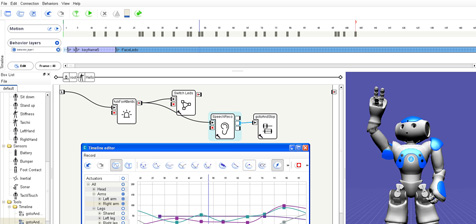

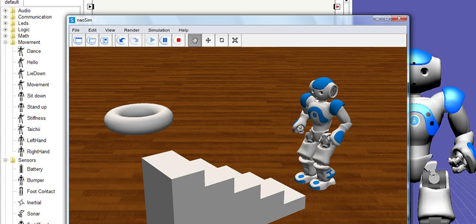

For development, I used Python and Choregraphe by Aldebaran Robotics on an Ubuntu 11.10 machine. The behavior was simulated in NAOsim by Cogmation Robotics, running remotely on a Windows 7 machine.

I used all three methods for creating motion to develop this routine:

- The Timeline Editor

- The Recording Mode

- The Motion API (ALMotion)

Each of these methods has its own pros and cons:

- Programming the exact actuator position values with the Motion API is time-consuming but gives the finest control over each joint

- The Timeline Editor (the timeline panel, or motion editor) lets you define frames quickly and specify the animation in each frame

- The Recording Mode lets you move NAO’s body into a position by hand and record the resulting actuator position values
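The Timeline Editor and the Motion API describe the same motion in different shapes. A timeline is a list of keyframes, while `ALMotion.angleInterpolation(names, angleLists, timeLists, isAbsolute)` expects one list of angles and one list of times per joint. The sketch below converts one into the other. It assumes a hypothetical keyframe format of `(time_in_seconds, {joint_name: angle_in_radians})`, and the helper name is made up for illustration. The joint values are placeholders, not the angles used in the actual routine. It avoids f-strings and type hints so that it should also run under the Python 2 interpreters that older NAOqi SDKs required.

```python
def keyframes_to_interpolation_args(keyframes):
    """Regroup timeline-style keyframes into per-joint lists (hypothetical helper).

    keyframes: list of (time_in_seconds, {joint_name: angle_in_radians}).
    Returns (names, angle_lists, time_lists), where angle_lists[i] and
    time_lists[i] hold the target angles and their times for names[i].
    """
    per_joint = {}
    # Process keyframes in time order so each joint's times increase.
    for t, pose in sorted(keyframes, key=lambda kf: kf[0]):
        if t <= 0:
            raise ValueError("keyframe times must be positive, got %s" % t)
        for joint, angle in pose.items():
            angles, times = per_joint.setdefault(joint, ([], []))
            # Two keyframes setting the same joint at the same time conflict.
            if times and t <= times[-1]:
                raise ValueError("duplicate time %s for joint %s" % (t, joint))
            angles.append(angle)
            times.append(t)
    names = sorted(per_joint)
    return (names,
            [per_joint[n][0] for n in names],
            [per_joint[n][1] for n in names])


# Placeholder poses in radians, NOT the values from the actual routine:
# both arms raised forward/overhead, then lowered back to the sides.
keyframes = [
    (1.5, {"LShoulderPitch": -1.4, "RShoulderPitch": -1.4}),
    (3.0, {"LShoulderPitch": 1.4, "RShoulderPitch": 1.4}),
]
names, angles, times = keyframes_to_interpolation_args(keyframes)
print(names)  # ['LShoulderPitch', 'RShoulderPitch']
print(times)  # [[1.5, 3.0], [1.5, 3.0]]

# With the NAOqi Python SDK, the result could then be sent to the robot or
# simulator (the IP address below is a placeholder):
#   from naoqi import ALProxy
#   motion = ALProxy("ALMotion", "127.0.0.1", 9559)
#   motion.setStiffnesses("Body", 1.0)
#   motion.angleInterpolation(names, angles, times, True)
```

Keeping poses as keyframes makes it easy to tweak a single frame, much as you would in the timeline. The conversion happens only at the point where the API is called.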

Building this behavior showed me how well designed the interface is between the simulator (NAOsim) and the development suite (Choregraphe and Telepathe). The number of times NAO fell on his face in the simulator highlighted the importance of extensive testing and simulation in a physics-enabled environment before deployment in the wild. My understanding of synchronous and asynchronous flows improved greatly through the box-based GUI, and eventually I programmed those flows with the API as well.
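You can illustrate the difference between synchronous and asynchronous flows without a robot. In the NAOqi Python SDK, a plain proxy call such as `motion.angleInterpolation(...)` blocks until the motion finishes. By contrast, `motion.post.angleInterpolation(...)` returns a task id immediately, and you can later pass that id to `motion.wait(task_id, 0)`. The sketch below mimics that pattern using Python 3's `concurrent.futures` thread pool in place of NAOqi. The `move` function is a hypothetical stand-in that only sleeps, and the body parts and durations are made up.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def move(part, duration, log):
    """Hypothetical stand-in for a blocking motion call on one body part."""
    log.append(("start", part))
    time.sleep(duration)
    log.append(("end", part))
    return part


def run_step(log):
    """Arms and head move at the same time (asynchronous); the step
    returns only after both have finished (a synchronization point)."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Like proxy.post.<method>(...): returns a handle immediately.
        arms = pool.submit(move, "arms", 0.2, log)
        head = pool.submit(move, "head", 0.2, log)
        # Like proxy.wait(task_id, 0): block until each task completes.
        return [arms.result(), head.result()]


log = []
start = time.time()
done = run_step(log)
# A plain blocking call, by contrast, returns only when its motion ends.
move("legs", 0.1, log)
elapsed = time.time() - start
print(done)  # ['arms', 'head']
# Arms and head overlap, so this should be close to 0.3 s rather than 0.5 s.
print("elapsed: %.2f s" % elapsed)
```

The main design question in a routine like the Sun Salutation is where to place these synchronization points. Parts of a single pose can move in parallel, but the next pose should start only after the current one is complete.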

An idea from my dad, along with watching locals practice this tradition, inspired me to build this behavior into the initial Human-Robot Interaction module. I hope you enjoyed the video.

Hi Abhinav,

Very impressive work with the NAO!

Can you kindly share your Choregraphe script and Python code so that others can reproduce this great motion sequence?

Thanks,

Limor

RoboSavvy.com

Abhinav,

This is just AWESOME!

Keep up the good work and keep enriching the technical world 🙂